2 Chapter 2 – Methods

Jennifer Walinga

Psychological Science

Psychologists study the behaviour of both humans and animals. The main purpose of this research is to help us understand people and to improve the quality of human lives. The results of psychological research are relevant to problems such as learning and memory, homelessness, psychological disorders, family instability, and aggressive behaviour and violence. Psychological research is used in a range of important areas, from public policy to driver safety. It guides court rulings with respect to racism and sexism (Brown v. Board of Education, 1954; Fiske, Bersoff, Borgida, Deaux, & Heilman, 1991), as well as court procedure, in the use of lie detectors during criminal trials, for example (Saxe, Dougherty, & Cross, 1985). Psychological research helps us understand how driver behaviour affects safety (Fajen & Warren, 2003), which methods of educating children are most effective (Alexander & Winne, 2006; Woolfolk-Hoy, 2005), how to best detect deception (DePaulo et al., 2003), and the causes of terrorism (Borum, 2004).

Some psychological research is basic research. Basic research is research that answers fundamental questions about behaviour. For instance, biopsychologists study how nerves conduct impulses from the receptors in the skin to the brain, and cognitive psychologists investigate how different types of studying influence memory for pictures and words. There is no particular reason to examine such things except to acquire a better knowledge of how these processes occur. Applied research is research that investigates issues that have implications for everyday life and provides solutions to everyday problems. Applied research has been conducted to study, among many other things, the most effective methods for reducing depression, the types of advertising campaigns that serve to reduce drug and alcohol abuse, the key predictors of managerial success in business, and the indicators of effective government programs.

Basic research and applied research inform each other, and advances in science occur more rapidly when each type of research is conducted (Lewin, 1999). For instance, although research concerning the role of practice on memory for lists of words is basic in orientation, the results could potentially be applied to help children learn to read. Correspondingly, psychologist-practitioners who wish to reduce the spread of AIDS or to promote volunteering frequently base their programs on the results of basic research. This basic AIDS or volunteering research is then applied to help change people’s attitudes and behaviours.

The results of psychological research are reported primarily in research articles published in scientific journals, and your instructor may require you to read some of these. The research reported in scientific journals has been evaluated, critiqued, and improved by scientists in the field through the process of peer review. In this book there are many citations of original research articles, and I encourage you to read those reports when you find a topic interesting. Most of these papers are readily available online through your college or university library. It is only by reading the original reports that you will really see how the research process works. A list of some of the most important journals in psychology is provided here for your information.

Psychological Journals

The following is a list of some of the most important journals in various sub-disciplines of psychology. The research articles in these journals are likely to be available in your college or university library. You should try to read the primary source material in these journals when you can.

General Psychology

- American Journal of Psychology

- American Psychologist

- Behavioral and Brain Sciences

- Canadian Journal of Behavioural Science

- Canadian Journal of Experimental Psychology

- Canadian Psychology

- Psychological Bulletin

- Psychological Methods

- Psychological Review

- Psychological Science

Biopsychology and Neuroscience

- Behavioral Neuroscience

- Journal of Comparative Psychology

- Psychophysiology

Clinical and Counselling Psychology

- Journal of Abnormal Psychology

- Journal of Consulting and Clinical Psychology

- Journal of Counselling Psychology

Cognitive Psychology

- Canadian Journal of Experimental Psychology

- Cognition

- Cognitive Psychology

- Journal of Memory and Language

- Perception & Psychophysics

Cross-Cultural, Personality, and Social Psychology

- Journal of Cross-Cultural Psychology

- Journal of Experimental Social Psychology

- Journal of Personality

- Journal of Personality and Social Psychology

- Personality and Social Psychology Bulletin

Developmental Psychology

- Child Development

- Developmental Psychology

Educational and School Psychology

- Educational Psychologist

- Journal of Educational Psychology

- Review of Educational Research

Environmental, Industrial, and Organizational Psychology

- Journal of Applied Psychology

- Organizational Behavior and Human Decision Processes

- Organizational Psychology

- Organizational Research Methods

- Personnel Psychology

2.1 Psychologists Use the Scientific Method to Guide Their Research

Learning Objectives

- Describe the principles of the scientific method and explain its importance in conducting and interpreting research.

- Differentiate laws from theories and explain how research hypotheses are developed and tested.

- Discuss the procedures that researchers use to ensure that their research with humans and with animals is ethical.

Psychologists aren’t the only people who seek to understand human behaviour and solve social problems. Philosophers, religious leaders, and politicians, among others, also strive to provide explanations for human behaviour. But psychologists believe that research is the best tool for understanding human beings and their relationships with others. Rather than accepting the claim of a philosopher that people do (or do not) have free will, a psychologist would collect data to empirically test whether or not people are able to actively control their own behaviour. Rather than accepting a politician’s contention that creating (or abandoning) a new centre for mental health will improve the lives of individuals in the inner city, a psychologist would empirically assess the effects of receiving mental health treatment on the quality of life of the recipients. The statements made by psychologists are empirical, which means they are based on systematic collection and analysis of data.

The Scientific Method

All scientists (whether they are physicists, chemists, biologists, sociologists, or psychologists) are engaged in the basic processes of collecting data and drawing conclusions about those data. The methods used by scientists have developed over many years and provide a common framework for developing, organizing, and sharing information. The scientific method is the set of assumptions, rules, and procedures scientists use to conduct research.

In addition to requiring that science be empirical, the scientific method demands that the procedures used be objective, or free from the personal bias or emotions of the scientist. The scientific method prescribes how scientists collect and analyze data, how they draw conclusions from data, and how they share data with others. These rules increase objectivity by placing data under the scrutiny of other scientists and even the public at large. Because data are reported objectively, other scientists know exactly how the scientist collected and analyzed the data. This means that they do not have to rely only on the scientist’s own interpretation of the data; they may draw their own, potentially different, conclusions.

Most new research is designed to replicate — that is, to repeat, add to, or modify — previous research findings. The scientific method therefore results in an accumulation of scientific knowledge through the reporting of research and the addition to and modification of these reported findings by other scientists.

Laws and Theories as Organizing Principles

One goal of research is to organize information into meaningful statements that can be applied in many situations. Principles that are so general as to apply to all situations in a given domain of inquiry are known as laws. There are well-known laws in the physical sciences, such as the law of gravity and the laws of thermodynamics, and there are some universally accepted laws in psychology, such as the law of effect and Weber’s law. But because laws are very general principles and their validity has already been well established, they are themselves rarely directly subjected to scientific test.

The next step down from laws in the hierarchy of organizing principles is theory. A theory is an integrated set of principles that explains and predicts many, but not all, observed relationships within a given domain of inquiry. One example of an important theory in psychology is the stage theory of cognitive development proposed by the Swiss psychologist Jean Piaget. The theory states that children pass through a series of cognitive stages as they grow, each of which must be mastered in succession before movement to the next cognitive stage can occur. This is an extremely useful theory in human development because it can be applied to many different content areas and can be tested in many different ways.

Good theories have four important characteristics. First, good theories are general, meaning they summarize many different outcomes. Second, they are parsimonious, meaning they provide the simplest possible account of those outcomes. The stage theory of cognitive development meets both of these requirements. It can account for developmental changes in behaviour across a wide variety of domains, and yet it does so parsimoniously — by hypothesizing a simple set of cognitive stages. Third, good theories provide ideas for future research. The stage theory of cognitive development has been applied not only to learning about cognitive skills, but also to the study of children’s moral (Kohlberg, 1966) and gender (Ruble & Martin, 1998) development.

Finally, good theories are falsifiable (Popper, 1959), which means the variables of interest can be adequately measured and the relationships between the variables that are predicted by the theory can be shown through research to be incorrect. The stage theory of cognitive development is falsifiable because the stages of cognitive reasoning can be measured and because if research discovers, for instance, that children learn new tasks before they have reached the cognitive stage hypothesized to be required for that task, then the theory will be shown to be incorrect.

No single theory is able to account for all behaviour in all cases. Rather, theories are each limited in that they make accurate predictions in some situations or for some people but not in other situations or for other people. As a result, there is a constant exchange between theory and data: existing theories are modified on the basis of collected data, and the new modified theories then make new predictions that are tested by new data, and so forth. When a better theory is found, it will replace the old one. This is part of the accumulation of scientific knowledge.

The Research Hypothesis

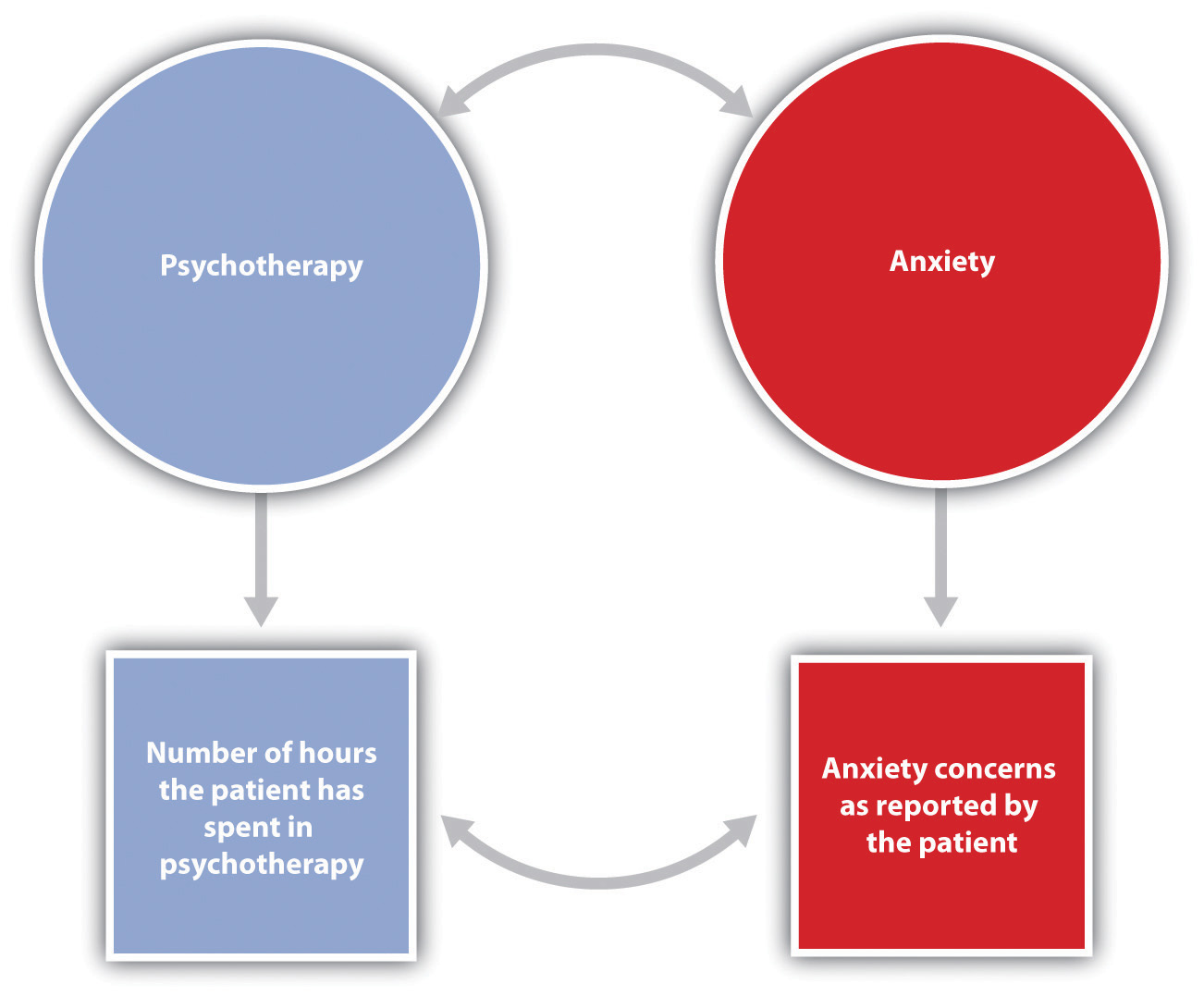

Theories are usually framed too broadly to be tested in a single experiment. Therefore, scientists use a more precise statement of the presumed relationship between specific parts of a theory — a research hypothesis — as the basis for their research. A research hypothesis is a specific and falsifiable prediction about the relationship between or among two or more variables, where a variable is any attribute that can assume different values among different people or across different times or places. The research hypothesis states the existence of a relationship between the variables of interest and the specific direction of that relationship. For instance, the research hypothesis “Using marijuana will reduce learning” predicts that there is a relationship between one variable, “using marijuana,” and another variable called “learning.” Similarly, in the research hypothesis “Participating in psychotherapy will reduce anxiety,” the variables that are expected to be related are “participating in psychotherapy” and “level of anxiety.”

When stated in an abstract manner, the ideas that form the basis of a research hypothesis are known as conceptual variables. Sometimes the conceptual variables are rather simple, such as age, gender, or weight. In other cases the conceptual variables represent more complex ideas, such as anxiety, cognitive development, learning, self-esteem, or sexism.

The first step in testing a research hypothesis involves turning the conceptual variables into measured variables, which are variables consisting of numbers that represent the conceptual variables. For instance, the conceptual variable “participating in psychotherapy” could be represented as the measured variable “number of psychotherapy hours the patient has accrued,” and the conceptual variable “using marijuana” could be assessed by having the research participants rate, on a scale from 1 to 10, how often they use marijuana or by administering a blood test that measures the presence of the chemicals in marijuana.

Psychologists use the term operational definition to refer to a precise statement of how a conceptual variable is turned into a measured variable. The relationship between conceptual and measured variables in a research hypothesis is diagrammed in Figure 3.1. The conceptual variables are represented in circles at the top of the figure (psychotherapy and anxiety), and the measured variables are represented in squares at the bottom (number of hours the patient has spent in psychotherapy and anxiety concerns as reported by the patient). The two vertical arrows, which lead from the conceptual variables to the measured variables, represent the operational definitions of the two variables. The arrows indicate the expectation that changes in the conceptual variables (psychotherapy and anxiety) will cause changes in the corresponding measured variables (number of hours in psychotherapy and reported anxiety concerns). The measured variables are then used to draw inferences about the conceptual variables.

Table 3.1 lists some potential operational definitions of conceptual variables that have been used in psychological research. As you read through this list, note that in contrast to the abstract conceptual variables, the measured variables are very specific. This specificity is important for two reasons. First, more specific definitions mean that there is less danger that the collected data will be misunderstood by others. Second, specific definitions will enable future researchers to replicate the research.

| Conceptual variable | Operational definitions |

|---|---|

| Aggression | |

| Interpersonal attraction | |

| Employee satisfaction | |

| Decision-making skills | |

| Depression | |

2.2 Psychologists Use Descriptive, Correlational, and Experimental Research Designs to Understand Behaviour

Learning Objectives

- Differentiate the goals of descriptive, correlational, and experimental research designs and explain the advantages and disadvantages of each.

- Explain the goals of descriptive research and the statistical techniques used to interpret it.

- Summarize the uses of correlational research and describe why correlational research cannot be used to infer causality.

- Review the procedures of experimental research and explain how it can be used to draw causal inferences.

Psychologists agree that if their ideas and theories about human behaviour are to be taken seriously, they must be backed up by data. However, the research of different psychologists is designed with different goals in mind, and the different goals require different approaches. These varying approaches, summarized in Table 3.2, are known as research designs. A research design is the specific method a researcher uses to collect, analyze, and interpret data. Psychologists use three major types of research designs in their research, and each provides an essential avenue for scientific investigation. Descriptive research is research designed to provide a snapshot of the current state of affairs. Correlational research is research designed to discover relationships among variables and to allow the prediction of future events from present knowledge. Experimental research is research in which initial equivalence among research participants in more than one group is created, followed by a manipulation of a given experience for these groups and a measurement of the influence of the manipulation. Each of the three research designs varies according to its strengths and limitations, and it is important to understand how each differs.

| Research design | Goal | Advantages | Disadvantages |

|---|---|---|---|

| Descriptive | To create a snapshot of the current state of affairs | Provides a relatively complete picture of what is occurring at a given time. Allows the development of questions for further study. | Does not assess relationships among variables. May be unethical if participants do not know they are being observed. |

| Correlational | To assess the relationships between and among two or more variables | Allows testing of expected relationships between and among variables and the making of predictions. Can assess these relationships in everyday life events. | Cannot be used to draw inferences about the causal relationships between and among the variables. |

| Experimental | To assess the causal impact of one or more experimental manipulations on a dependent variable | Allows drawing of conclusions about the causal relationships among variables. | Cannot experimentally manipulate many important variables. May be expensive and time consuming. |

| Source: Stangor, 2011. | |||

Descriptive Research: Assessing the Current State of Affairs

Descriptive research is designed to create a snapshot of the current thoughts, feelings, or behaviour of individuals. This section reviews three types of descriptive research: case studies, surveys, and naturalistic observation (Figure 3.4).

Sometimes the data in a descriptive research project are based on only a small set of individuals, often only one person or a single small group. These research designs are known as case studies: descriptive records of one or more individuals’ experiences and behaviour. Sometimes case studies involve ordinary individuals, as when developmental psychologist Jean Piaget used his observation of his own children to develop his stage theory of cognitive development. More frequently, case studies are conducted on individuals who have unusual or abnormal experiences or characteristics or who find themselves in particularly difficult or stressful situations. The assumption is that by carefully studying individuals who are socially marginal, who are experiencing unusual situations, or who are going through a difficult phase in their lives, we can learn something about human nature.

Sigmund Freud was a master of using the psychological difficulties of individuals to draw conclusions about basic psychological processes. Freud wrote case studies of some of his most interesting patients and used these careful examinations to develop his important theories of personality. One classic example is Freud’s description of “Little Hans,” a child whose fear of horses the psychoanalyst interpreted in terms of repressed sexual impulses and the Oedipus complex (Freud, 1909/1964).

Another well-known case study is that of Phineas Gage, a man whose thoughts and emotions were extensively studied by cognitive psychologists after a railroad spike was blasted through his skull in an accident. Although there are questions about the interpretation of this case study (Kotowicz, 2007), it did provide early evidence that the brain’s frontal lobe is involved in emotion and morality (Damasio et al., 2005). An interesting example of a case study in clinical psychology is described by Rokeach (1964), who investigated in detail the beliefs of and interactions among three patients with schizophrenia, all of whom were convinced they were Jesus Christ.

In other cases the data from descriptive research projects come in the form of a survey — a measure administered through either an interview or a written questionnaire to get a picture of the beliefs or behaviours of a sample of people of interest. The people chosen to participate in the research (known as the sample) are selected to be representative of all the people that the researcher wishes to know about (the population). In election polls, for instance, a sample is taken from the population of all “likely voters” in the upcoming elections.

The results of surveys may sometimes be rather mundane, such as “Nine out of 10 doctors prefer Tymenocin” or “The median income in the city of Hamilton is $46,712.” Yet other times (particularly in discussions of social behaviour), the results can be shocking: “More than 40,000 people are killed by gunfire in the United States every year” or “More than 60% of women between the ages of 50 and 60 suffer from depression.” Descriptive research is frequently used by psychologists to get an estimate of the prevalence (or incidence) of psychological disorders.

A final type of descriptive research — known as naturalistic observation — is research based on the observation of everyday events. For instance, a developmental psychologist who watches children on a playground and describes what they say to each other while they play is conducting descriptive research, as is a biopsychologist who observes animals in their natural habitats. One example of observational research involves a systematic procedure known as the strange situation, used to get a picture of how adults and young children interact. The data that are collected in the strange situation are systematically coded in a coding sheet such as that shown in Table 3.3.

| Coder name: Olive | ||||

| This table represents a sample coding sheet from an episode of the “strange situation,” in which an infant (usually about one year old) is observed playing in a room with two adults — the child’s mother and a stranger. Each of the four coding categories is scored by the coder from 1 (the baby makes no effort to engage in the behaviour) to 7 (the baby makes a significant effort to engage in the behaviour). More information about the meaning of the coding can be found in Ainsworth, Blehar, Waters, and Wall (1978). | ||||

| Coding categories explained | ||||

| Proximity | The baby moves toward, grasps, or climbs on the adult. | |||

| Maintaining contact | The baby resists being put down by the adult by crying or trying to climb back up. | |||

| Resistance | The baby pushes, hits, or squirms to be put down from the adult’s arms. | |||

| Avoidance | The baby turns away or moves away from the adult. | |||

| Episode | Proximity | Contact | Resistance | Avoidance |

|---|---|---|---|---|

| Mother and baby play alone | 1 | 1 | 1 | 1 |

| Mother puts baby down | 4 | 1 | 1 | 1 |

| Stranger enters room | 1 | 2 | 3 | 1 |

| Mother leaves room; stranger plays with baby | 1 | 3 | 1 | 1 |

| Mother re-enters, greets and may comfort baby, then leaves again | 4 | 2 | 1 | 2 |

| Stranger tries to play with baby | 1 | 3 | 1 | 1 |

| Mother re-enters and picks up baby | 6 | 6 | 1 | 2 |

| Source: Stangor, 2011. | ||||
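To make the idea of systematic coding concrete, the sample coding sheet can be entered as data and summarized. The sketch below copies the scores from the table above. The helper names `category_totals` and `peak_episode` are hypothetical, and adding up scores across episodes is only an illustrative summary, not the scoring procedure described by Ainsworth and colleagues.

```python
# Scores copied from the sample coding sheet above. Each tuple holds
# (proximity, contact, resistance, avoidance) on the 1-7 scale.
CATEGORIES = ("proximity", "contact", "resistance", "avoidance")

coding_sheet = {
    "Mother and baby play alone": (1, 1, 1, 1),
    "Mother puts baby down": (4, 1, 1, 1),
    "Stranger enters room": (1, 2, 3, 1),
    "Mother leaves room; stranger plays with baby": (1, 3, 1, 1),
    "Mother re-enters, greets and may comfort baby, then leaves again": (4, 2, 1, 2),
    "Stranger tries to play with baby": (1, 3, 1, 1),
    "Mother re-enters and picks up baby": (6, 6, 1, 2),
}


def category_totals(sheet):
    """Sum each coding category across all episodes (illustrative summary only)."""
    totals = dict.fromkeys(CATEGORIES, 0)
    for scores in sheet.values():
        for name, score in zip(CATEGORIES, scores):
            totals[name] += score
    return totals


def peak_episode(sheet, category):
    """Return the episode with the highest score in one category."""
    index = CATEGORIES.index(category)
    return max(sheet, key=lambda episode: sheet[episode][index])


totals = category_totals(coding_sheet)
print(totals)
print(peak_episode(coding_sheet, "proximity"))
```

For this sheet, the proximity and contact scores peak when the mother re-enters and picks up the baby, which is the kind of pattern a coder would look for in the record.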

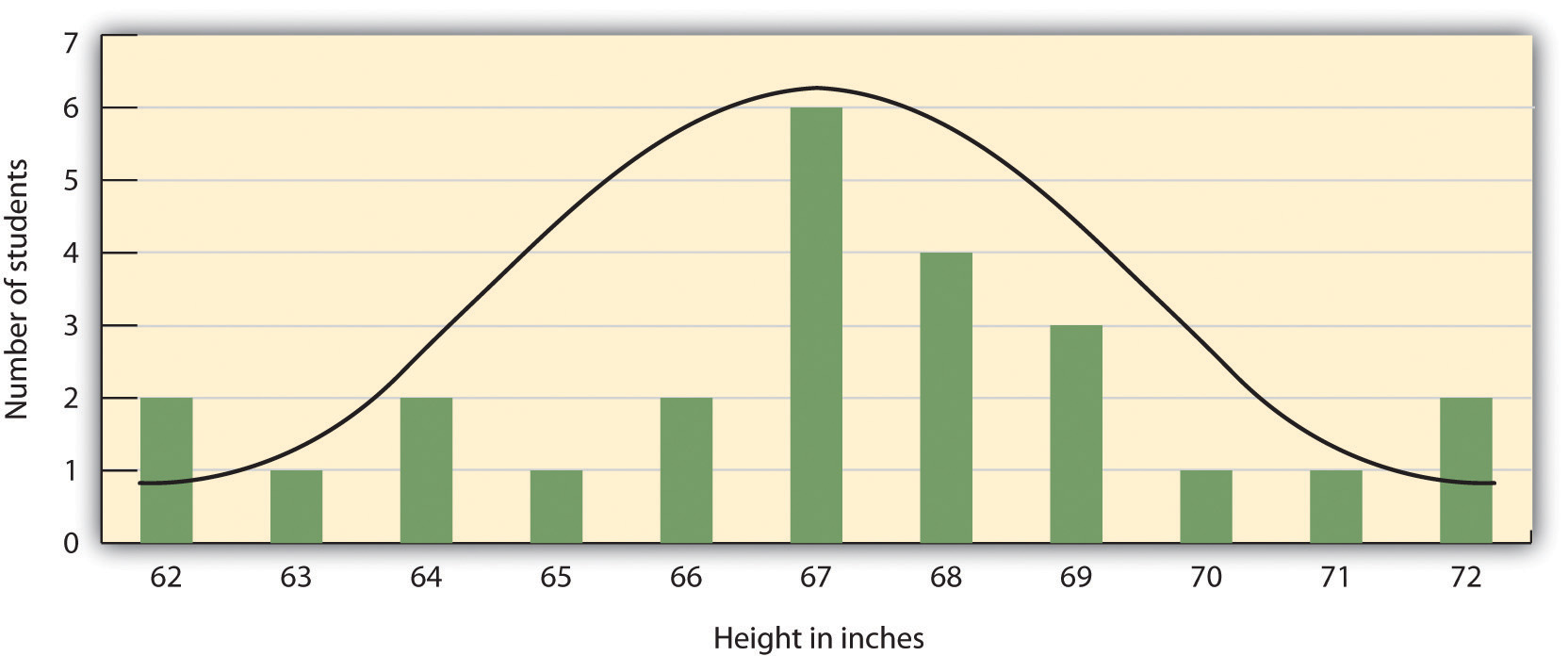

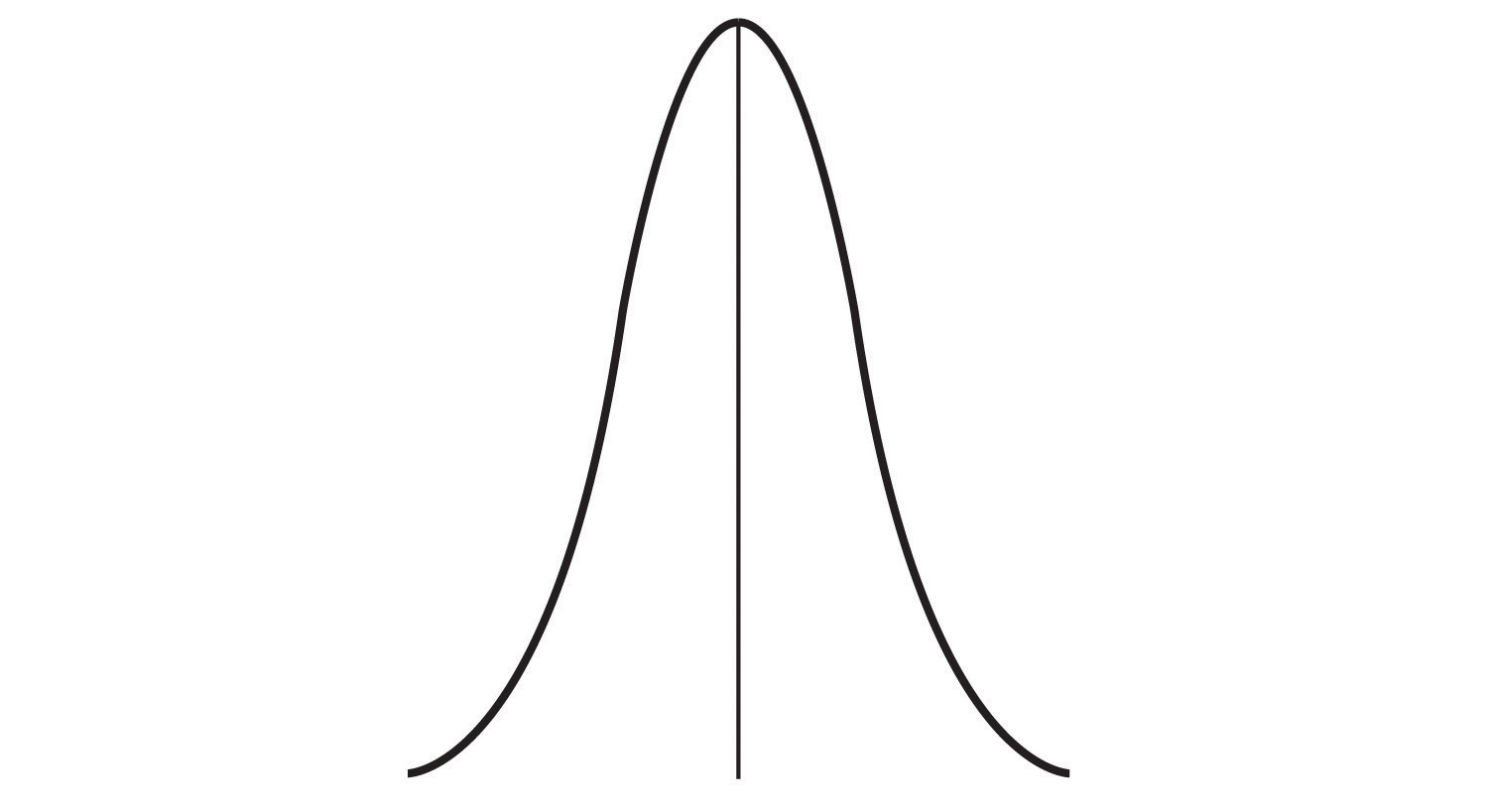

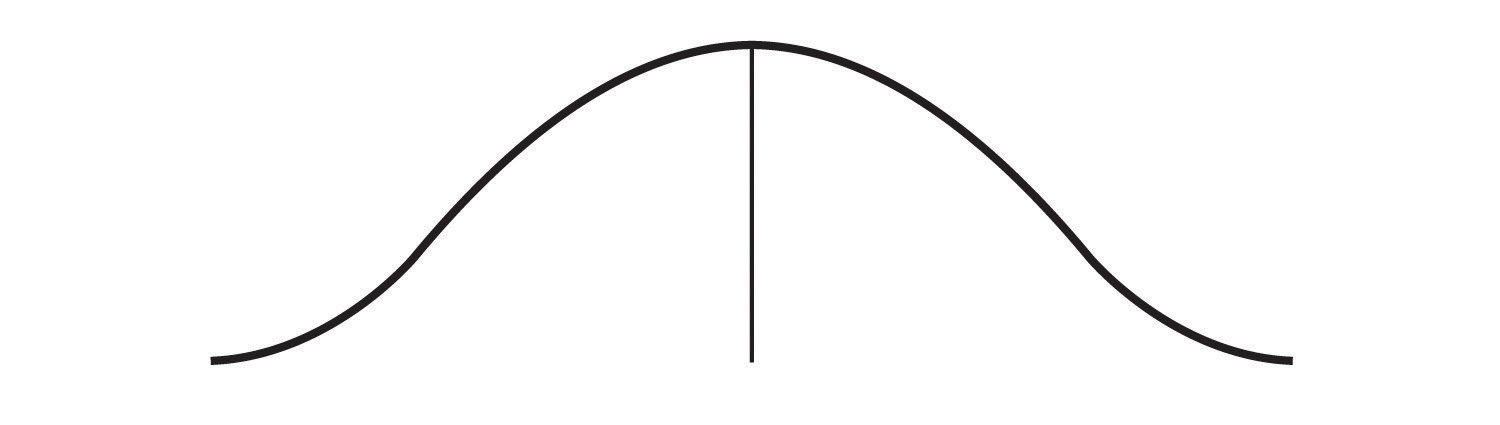

The results of descriptive research projects are analyzed using descriptive statistics — numbers that summarize the distribution of scores on a measured variable. Most variables have distributions similar to that shown in Figure 3.5 where most of the scores are located near the centre of the distribution, and the distribution is symmetrical and bell-shaped. A data distribution that is shaped like a bell is known as a normal distribution.

A distribution can be described in terms of its central tendency (that is, the point in the distribution around which the data are centred) and its dispersion, or spread. The arithmetic average, or arithmetic mean, is the most commonly used measure of central tendency. It is computed by calculating the sum of all the scores of the variable and dividing this sum by the number of participants in the distribution (denoted by the letter N). The sample mean is usually indicated by the letter M. In the data presented in Figure 3.5 the mean height of the students is 67.12 inches (170.5 cm).
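The calculation of the mean can be written out in a few lines. This is a minimal sketch: the function name `arithmetic_mean` is an assumption for illustration, and the heights are hypothetical values, not the data behind Figure 3.5.

```python
def arithmetic_mean(scores):
    """Return M: the sum of the scores divided by N, the number of scores."""
    n = len(scores)
    if n == 0:
        raise ValueError("the mean of an empty list is undefined")
    return sum(scores) / n


# Hypothetical heights in inches (not the data shown in Figure 3.5).
heights = [62, 65, 66, 67, 68, 70, 72]
print(round(arithmetic_mean(heights), 2))
```

Here the scores sum to 470 and N is 7, so M is about 67.14 inches.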

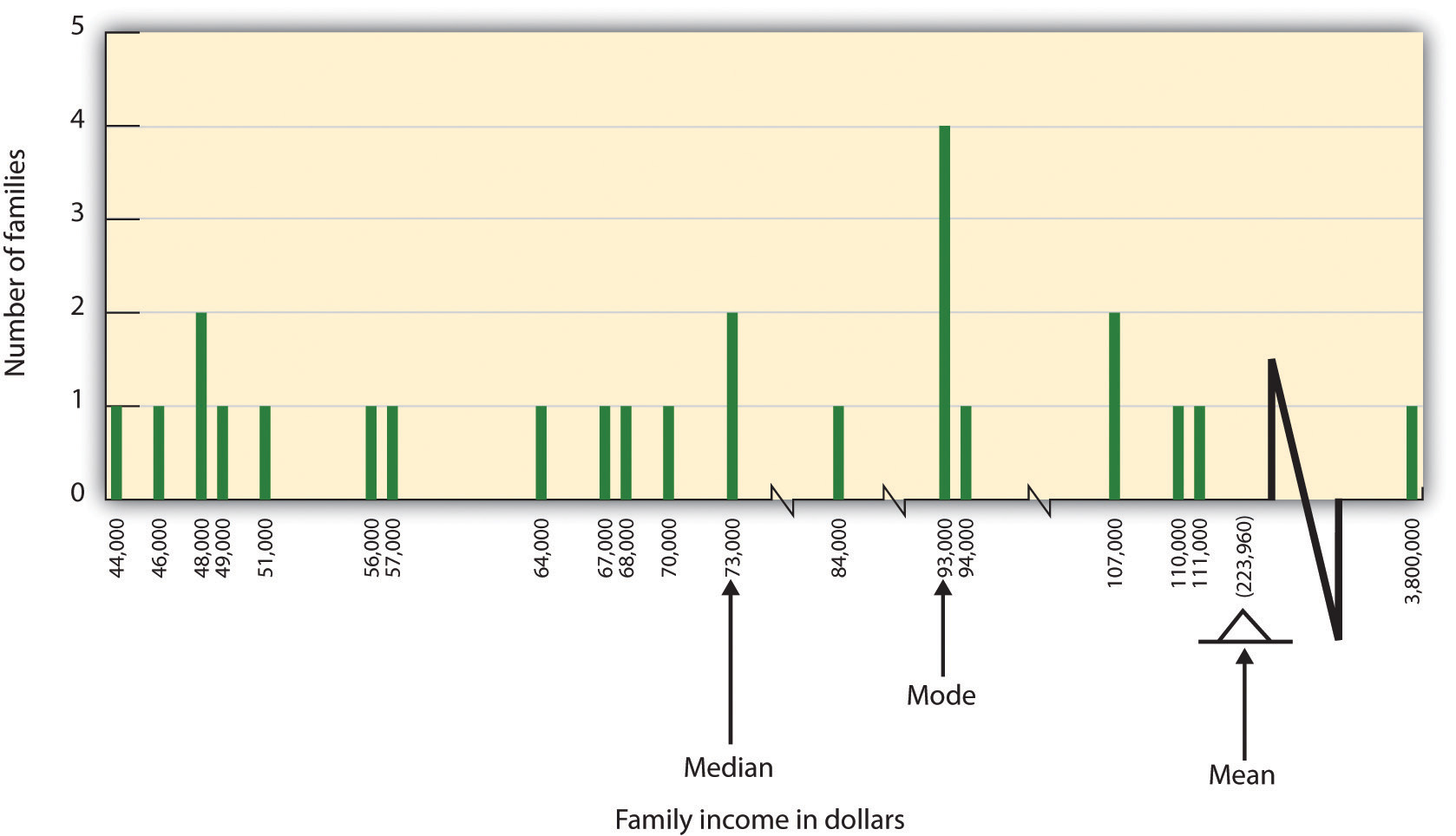

In some cases, however, the data distribution is not symmetrical. This occurs when there are one or more extreme scores (known as outliers) at one end of the distribution. Consider, for instance, the variable of family income (see Figure 3.6), which includes an outlier (a value of $3,800,000). In this case the mean is not a good measure of central tendency. Although it appears from Figure 3.6 that the central tendency of the family income variable should be around $70,000, the mean family income is actually $223,960. The single very extreme income has a disproportionate impact on the mean, resulting in a value that does not well represent the central tendency.

The median is used as an alternative measure of central tendency when distributions are not symmetrical. The median is the score in the centre of the distribution, meaning that 50% of the scores are greater than the median and 50% of the scores are less than the median. In our case, the median household income ($73,000) is a much better indication of central tendency than is the mean household income ($223,960).

A final measure of central tendency, known as the mode, represents the value that occurs most frequently in the distribution. You can see from Figure 3.6 that the mode for the family income variable is $93,000 (it occurs four times).
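The effect of an outlier on the three measures of central tendency can be checked with Python's standard `statistics` module. The incomes below are hypothetical and are not the values plotted in Figure 3.6; the `summarize` helper is likewise an assumption made for illustration.

```python
from statistics import mean, median, mode


def summarize(scores):
    """Return the three measures of central tendency for a list of scores."""
    return {"mean": mean(scores), "median": median(scores), "mode": mode(scores)}


# Hypothetical family incomes in dollars (not the data behind Figure 3.6).
typical_incomes = [48_000, 57_000, 57_000, 73_000, 93_000, 93_000, 93_000]
with_outlier = typical_incomes + [3_800_000]  # one very extreme income

print(summarize(typical_incomes))
print(summarize(with_outlier))
```

In this made-up sample, adding the single extreme income moves the mean from roughly $73,400 to $539,250, while the median only shifts from $73,000 to $83,000 and the mode stays at $93,000.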

In addition to summarizing the central tendency of a distribution, descriptive statistics convey information about how the scores of the variable are spread around the central tendency. Dispersion refers to the extent to which the scores are tightly clustered around the central tendency, as seen in Figure 3.7, or more spread out away from it, as seen in Figure 3.8.

One simple measure of dispersion is to find the largest (the maximum) and the smallest (the minimum) observed values of the variable and to compute the range of the variable as the maximum observed score minus the minimum observed score. You can check that the range of the height variable in Figure 3.5 is 72 – 62 = 10. The standard deviation, symbolized as s, is the most commonly used measure of dispersion. Distributions with a larger standard deviation have more spread. The standard deviation of the height variable is s = 2.74, and the standard deviation of the family income variable is s = $745,337.
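The two measures of dispersion can be sketched as follows. The text does not give a formula for s, so this example assumes the common sample formula (the square root of the summed squared deviations from the mean, divided by N − 1). The function names and both sets of scores are hypothetical.

```python
from math import sqrt


def value_range(scores):
    """Range: the maximum observed score minus the minimum observed score."""
    return max(scores) - min(scores)


def standard_deviation(scores):
    """Sample standard deviation s, assuming the N - 1 denominator."""
    n = len(scores)
    if n < 2:
        raise ValueError("at least two scores are needed")
    m = sum(scores) / n
    sum_of_squares = sum((x - m) ** 2 for x in scores)
    return sqrt(sum_of_squares / (n - 1))


# Two hypothetical distributions with the same mean (68) but different spread.
tight = [66, 67, 67, 68, 68, 68, 69, 69, 70]
spread = [60, 63, 65, 67, 68, 69, 71, 73, 76]

for label, scores in (("tight", tight), ("spread", spread)):
    print(label, value_range(scores), round(standard_deviation(scores), 2))
```

Both lists have the same mean, but the second has a range of 16 instead of 4 and a standard deviation of about 4.97 instead of about 1.22, which is what "more spread" means in practice.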

An advantage of descriptive research is that it attempts to capture the complexity of everyday behaviour. Case studies provide detailed information about a single person or a small group of people, surveys capture the thoughts or reported behaviours of a large population of people, and naturalistic observation objectively records the behaviour of people or animals as it occurs naturally. Thus descriptive research is used to provide a relatively complete understanding of what is currently happening.

Despite these advantages, descriptive research has a distinct disadvantage in that, although it allows us to get an idea of what is currently happening, it is usually limited to static pictures. Although descriptions of particular experiences may be interesting, they are not always transferable to other individuals in other situations, nor do they tell us exactly why specific behaviours or events occurred. For instance, descriptions of individuals who have suffered a stressful event, such as a war or an earthquake, can be used to understand the individuals’ reactions to the event but cannot tell us anything about the long-term effects of the stress. And because there is no comparison group that did not experience the stressful situation, we cannot know what these individuals would be like if they hadn’t had the stressful experience.

Correlational Research: Seeking Relationships among Variables

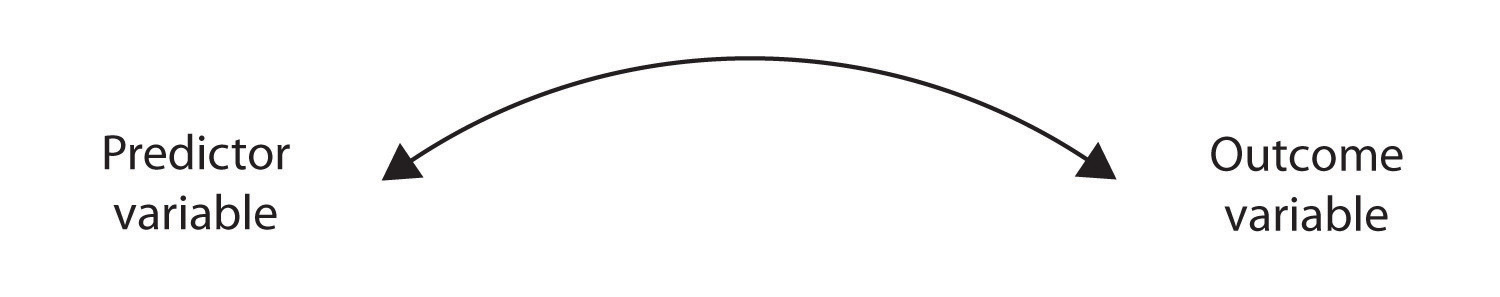

In contrast to descriptive research, which is designed primarily to provide static pictures, correlational research involves the measurement of two or more relevant variables and an assessment of the relationship between or among those variables. For instance, the variables of height and weight are systematically related (correlated) because taller people generally weigh more than shorter people. In the same way, study time and memory errors are also related, because the more time a person is given to study a list of words, the fewer errors he or she will make. When there are two variables in the research design, one of them is called the predictor variable and the other the outcome variable. The research design can be visualized as shown in Figure 3.9, where the curved arrow represents the expected correlation between these two variables.

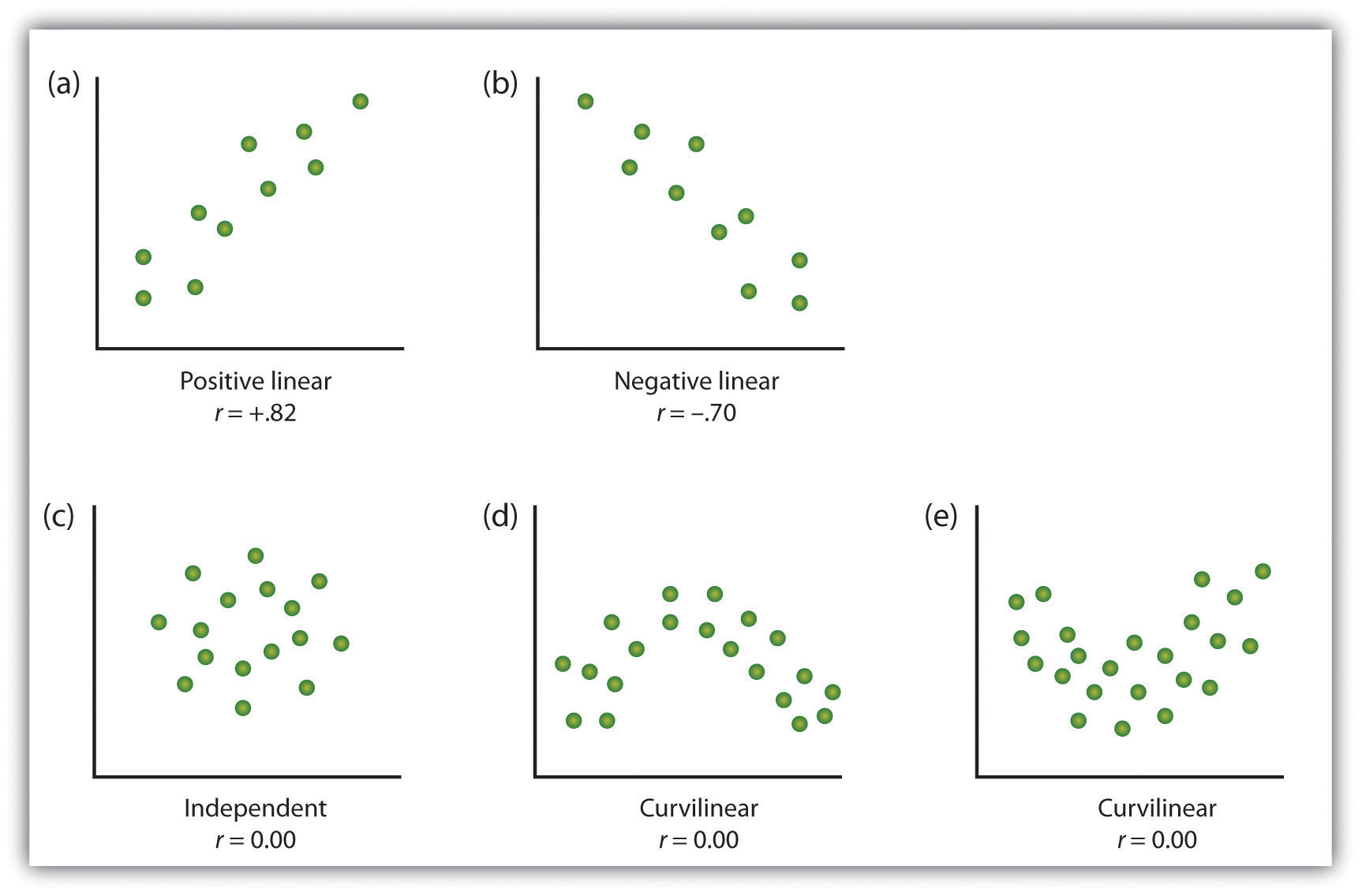

One way of organizing the data from a correlational study with two variables is to graph the values of each of the measured variables using a scatter plot. As you can see in Figure 3.10 a scatter plot is a visual image of the relationship between two variables. A point is plotted for each individual at the intersection of his or her scores for the two variables. When the association between the variables on the scatter plot can be easily approximated with a straight line, as in parts (a) and (b) of Figure 3.10 the variables are said to have a linear relationship.

When the straight line indicates that individuals who have above-average values for one variable also tend to have above-average values for the other variable, as in part (a), the relationship is said to be positive linear. Examples of positive linear relationships include those between height and weight, between education and income, and between age and mathematical abilities in children. In each case, people who score higher on one of the variables also tend to score higher on the other variable. Negative linear relationships, in contrast, as shown in part (b), occur when above-average values for one variable tend to be associated with below-average values for the other variable. Examples of negative linear relationships include those between the age of a child and the number of diapers the child uses, and between practice on and errors made on a learning task. In these cases, people who score higher on one of the variables tend to score lower on the other variable.

Relationships between variables that cannot be described with a straight line are known as nonlinear relationships. Part (c) of Figure 3.10 shows a common pattern in which the distribution of the points is essentially random. In this case there is no relationship at all between the two variables, and they are said to be independent. Parts (d) and (e) of Figure 3.10 show patterns of association in which, although there is an association, the points are not well described by a single straight line. For instance, part (d) shows the type of relationship that frequently occurs between anxiety and performance. Increases in anxiety from low to moderate levels are associated with performance increases, whereas increases in anxiety from moderate to high levels are associated with decreases in performance. Relationships that change in direction and thus are not described by a single straight line are called curvilinear relationships.

The most common statistical measure of the strength of linear relationships among variables is the Pearson correlation coefficient, which is symbolized by the letter r. The value of the correlation coefficient ranges from r = –1.00 to r = +1.00. The direction of the linear relationship is indicated by the sign of the correlation coefficient. Positive values of r (such as r = .54 or r = .67) indicate that the relationship is positive linear (i.e., the pattern of the dots on the scatter plot runs from the lower left to the upper right), whereas negative values of r (such as r = –.30 or r = –.72) indicate negative linear relationships (i.e., the dots run from the upper left to the lower right). The strength of the linear relationship is indexed by the distance of the correlation coefficient from zero (its absolute value). For instance, r = –.54 is a stronger relationship than r = .30, and r = .72 is a stronger relationship than r = –.57. Because the Pearson correlation coefficient measures only linear relationships, variables that have curvilinear relationships are not well described by r, and the observed correlation may be close to zero even when the variables are clearly associated.
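The calculation behind r can be sketched in a few lines of Python. This is a minimal illustration rather than a replacement for statistical software: the function name `pearson_r` and all of the scores below are hypothetical, chosen so that one pair of variables is perfectly linear and the other follows the inverted-U pattern described for anxiety and performance.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient for two equal-length lists of scores."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # Sum of cross-products of each person's deviations from the two means
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    # Sums of squared deviations for each variable
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical scores for nine people (not data from any real study)
anxiety = [1, 2, 3, 4, 5, 6, 7, 8, 9]
linear_score = [2 * a + 1 for a in anxiety]         # rises steadily with anxiety
inverted_u = [20 - (a - 5) ** 2 for a in anxiety]   # rises, peaks at 5, then falls

r_linear = pearson_r(anxiety, linear_score)
r_curved = pearson_r(anxiety, inverted_u)
print(f"linear: r = {r_linear:.2f}")
print(f"curvilinear: r = {r_curved:.2f}")
```

In this constructed example the linear pair gives r = 1.00, while the inverted-U pair gives an r of essentially zero even though performance is completely determined by anxiety. The scatter plot, not r alone, reveals the curvilinear pattern.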

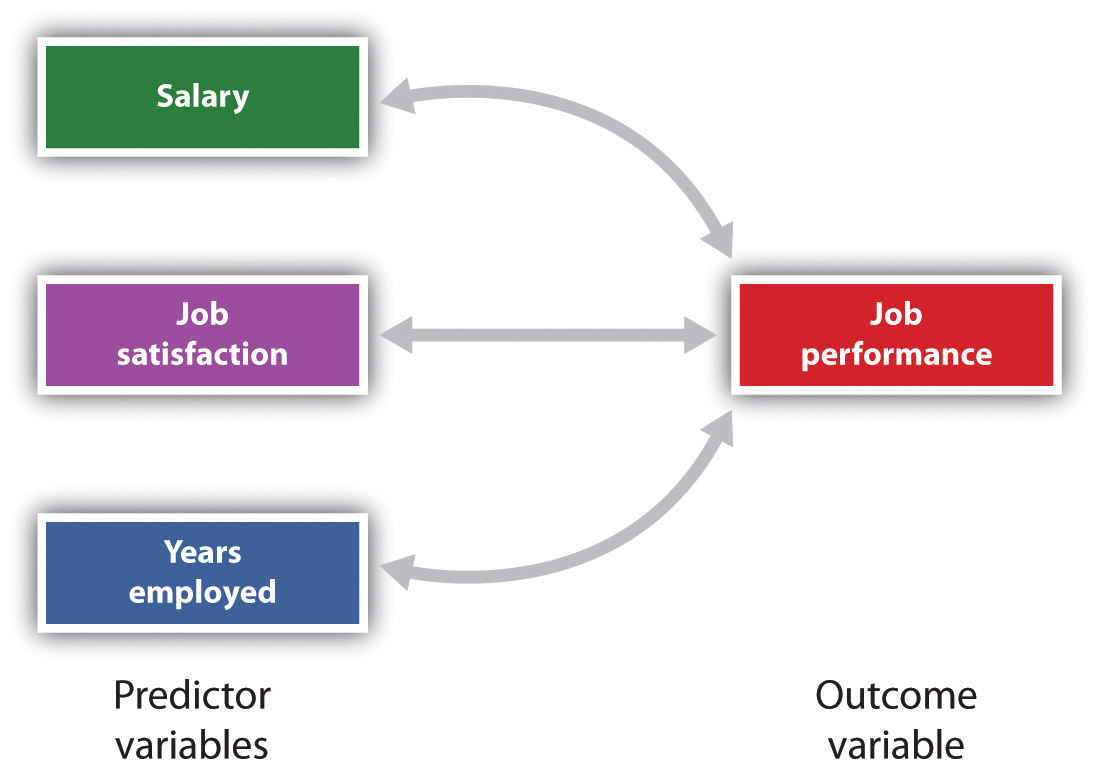

It is also possible to study relationships among more than two measures at the same time. A research design in which more than one predictor variable is used to predict a single outcome variable is analyzed through multiple regression (Aiken & West, 1991). Multiple regression is a statistical technique, based on correlation coefficients among variables, that allows predicting a single outcome variable from more than one predictor variable. For instance, Figure 3.11 shows a multiple regression analysis in which three predictor variables (salary, job satisfaction, and years employed) are used to predict a single outcome (job performance). The use of multiple regression analysis shows an important advantage of correlational research designs: they can be used to make predictions about a person’s likely score on an outcome variable (e.g., job performance) based on knowledge of other variables.
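To make the idea of predicting one outcome from several predictors concrete, here is a minimal sketch of ordinary least squares regression in plain Python. Everything in it is an assumption made for illustration: the function name `fit_multiple_regression`, the six employees, and the job performance scores, which are generated without noise from known weights so that the fitted weights can be checked. Real analyses would use statistical software and real, noisy data.

```python
def fit_multiple_regression(predictors, outcome):
    """Ordinary least squares: returns [intercept, weight_1, weight_2, ...]."""
    # Put a leading 1 on every row so the model includes an intercept
    rows = [[1.0] + [float(v) for v in p] for p in predictors]
    k = len(rows[0])
    # Build the normal equations (X'X) b = X'y
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * y for r, y in zip(rows, outcome)) for i in range(k)]
    # Solve them by Gauss-Jordan elimination with partial pivoting
    aug = [xtx[i] + [xty[i]] for i in range(k)]
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(k):
            if r != col:
                factor = aug[r][col] / aug[col][col]
                aug[r] = [a - factor * b for a, b in zip(aug[r], aug[col])]
    return [aug[i][k] / aug[i][i] for i in range(k)]

# Hypothetical employees: (salary in $1,000s, job satisfaction 1-7, years employed)
employees = [(40, 3, 1), (55, 5, 4), (60, 4, 10), (45, 6, 3), (70, 7, 8), (65, 2, 6)]
# Hypothetical, noise-free performance scores built from known weights
performance = [10 + 0.5 * s + 2 * j + 1 * y for s, j, y in employees]

weights = fit_multiple_regression(employees, performance)

# Predict the likely performance of a new (hypothetical) employee
new_employee = (50, 4, 2)
predicted = weights[0] + sum(w * x for w, x in zip(weights[1:], new_employee))
print([round(w, 3) for w in weights], round(predicted, 1))
```

Because the scores were constructed from the weights 10, 0.5, 2, and 1, the procedure recovers those values (up to rounding), and the prediction for the new employee is about 45. With real data the fit would be imperfect, and the weights would describe prediction, not causation.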

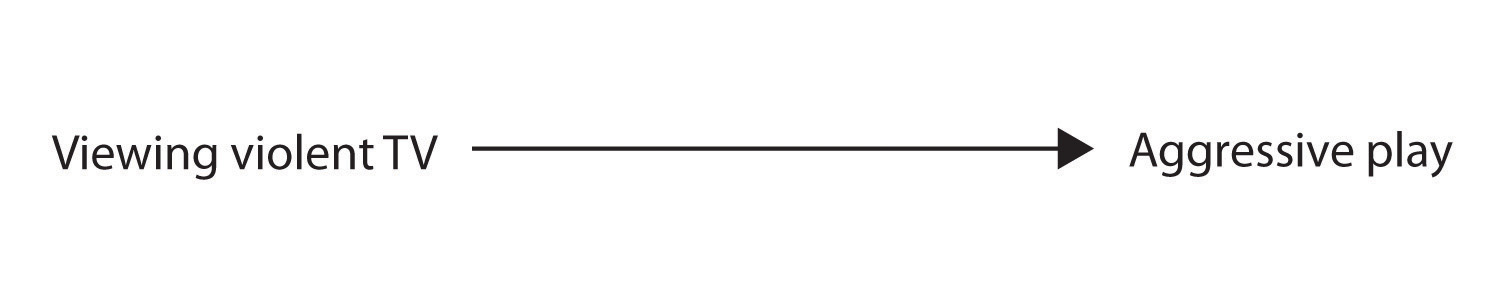

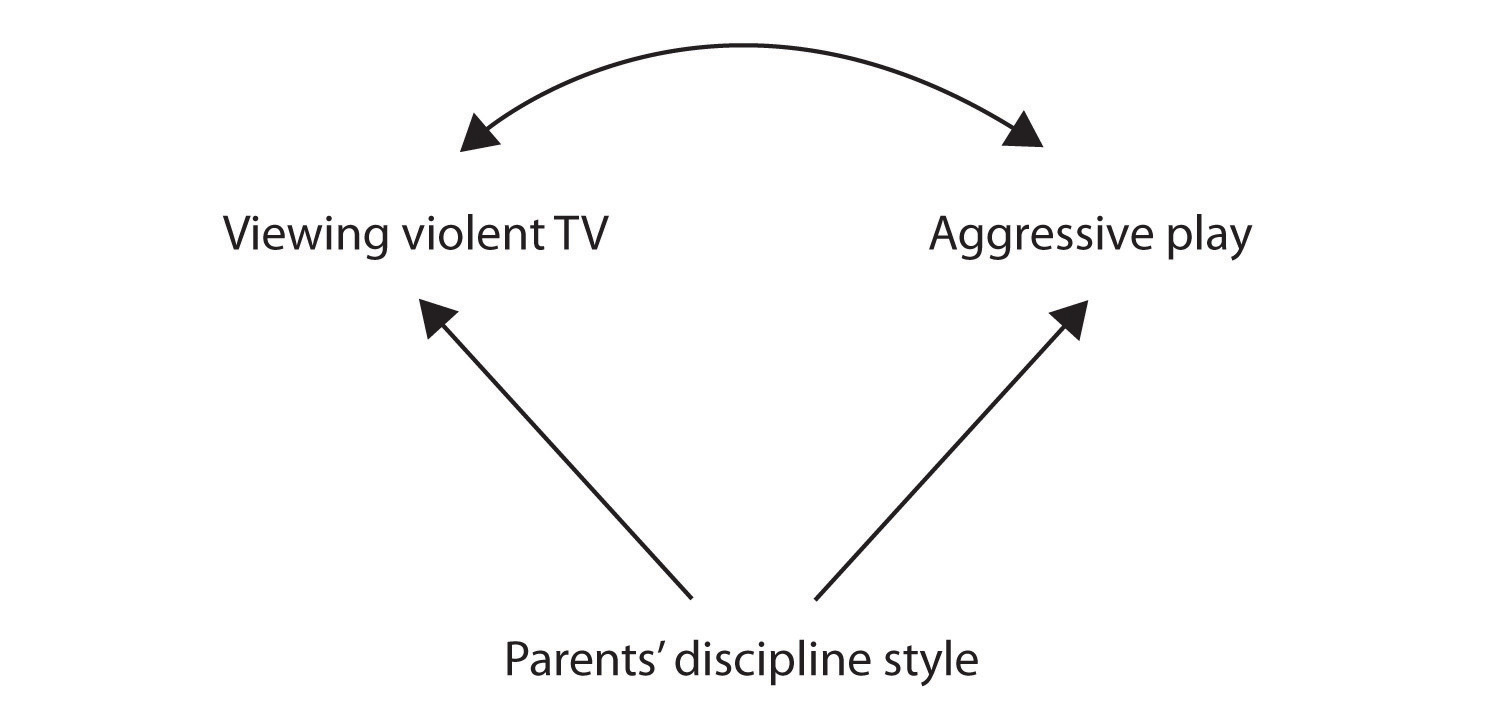

An important limitation of correlational research designs is that they cannot be used to draw conclusions about the causal relationships among the measured variables. Consider, for instance, a researcher who has hypothesized that viewing violent behaviour will cause increased aggressive play in children. He has collected, from a sample of Grade 4 children, a measure of how many violent television shows each child views during the week, as well as a measure of how aggressively each child plays on the school playground. From his collected data, the researcher discovers a positive correlation between the two measured variables.

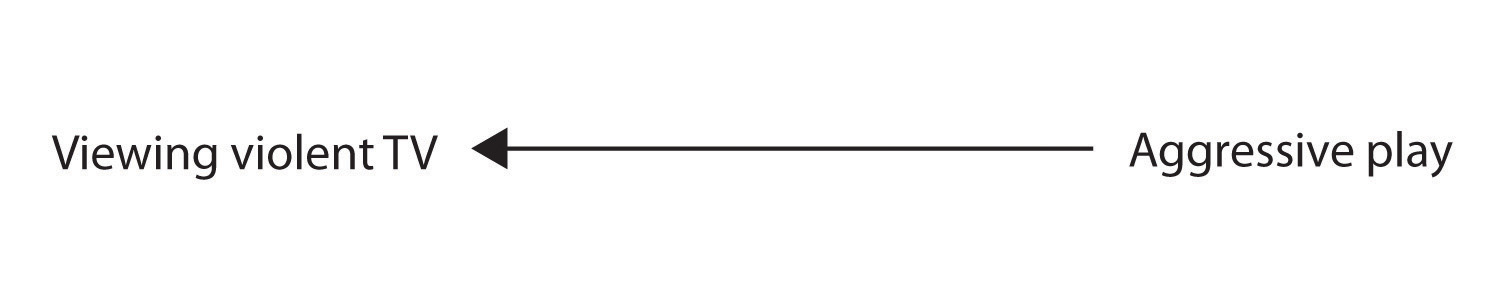

Although this positive correlation appears to support the researcher’s hypothesis, it cannot be taken to indicate that viewing violent television causes aggressive behaviour. Although the researcher is tempted to assume that viewing violent television causes aggressive play, there are other possibilities. One alternative possibility is that the causal direction is exactly opposite from what has been hypothesized. Perhaps children who have behaved aggressively at school develop residual excitement that leads them to want to watch violent television shows at home (Figure 3.13):

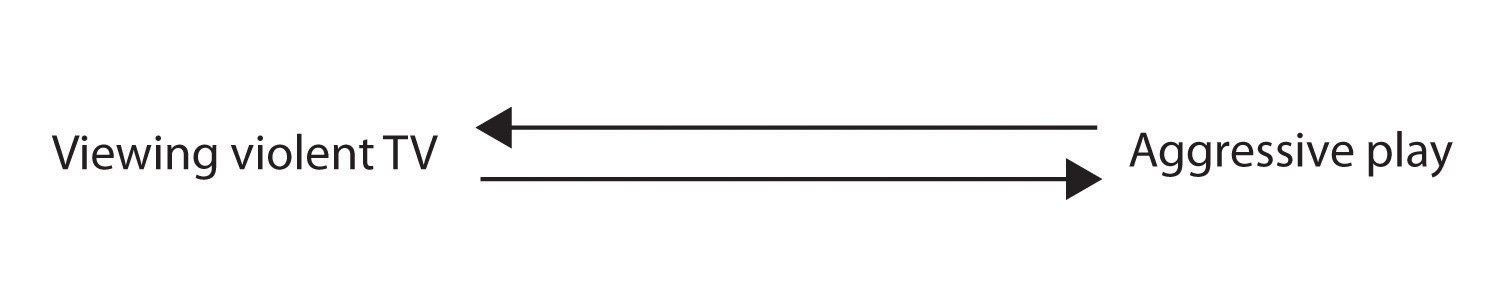

Although this possibility may seem less likely, there is no way to rule out the possibility of such reverse causation on the basis of this observed correlation. It is also possible that both causal directions are operating and that the two variables cause each other (Figure 3.14).

Still another possible explanation for the observed correlation is that it has been produced by the presence of a common-causal variable (also known as a third variable). A common-causal variable is a variable that is not part of the research hypothesis but that causes both the predictor and the outcome variable and thus produces the observed correlation between them. In our example, a potential common-causal variable is the discipline style of the children’s parents. Parents who use a harsh and punitive discipline style may produce children who like to watch violent television and who also behave aggressively in comparison to children whose parents use less harsh discipline (Figure 3.15).

In this case, television viewing and aggressive play would be positively correlated (as indicated by the curved arrow between them), even though neither one caused the other but they were both caused by the discipline style of the parents (the straight arrows). When the predictor and outcome variables are both caused by a common-causal variable, the observed relationship between them is said to be spurious. A spurious relationship is a relationship between two variables in which a common-causal variable produces and “explains away” the relationship. If effects of the common-causal variable were taken away, or controlled for, the relationship between the predictor and outcome variables would disappear. In the example, the relationship between aggression and television viewing might be spurious because by controlling for the effect of the parents’ disciplining style, the relationship between television viewing and aggressive behaviour might go away.
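A small simulation can show how a common-causal variable produces a spurious correlation and how controlling for it makes the correlation disappear. The sketch below is hypothetical throughout: the scores are randomly generated rather than collected, and "controlling for" discipline style is implemented with the standard first-order partial correlation formula, which is one common way to do it and not necessarily the method any particular researcher would choose.

```python
import math
import random

def pearson_r(x, y):
    """Pearson correlation coefficient for two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def partial_r(r_xy, r_xz, r_yz):
    """Correlation between x and y after controlling for z."""
    return (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))

rng = random.Random(1)  # fixed seed so the simulation is repeatable
n = 2000                # number of simulated children

# Harsh discipline raises both variables; neither variable affects the other
discipline = [rng.gauss(0, 1) for _ in range(n)]
tv_violence = [d + rng.gauss(0, 1) for d in discipline]
aggression = [d + rng.gauss(0, 1) for d in discipline]

r_tv_agg = pearson_r(tv_violence, aggression)
r_controlled = partial_r(
    r_tv_agg,
    pearson_r(tv_violence, discipline),
    pearson_r(aggression, discipline),
)
print(f"observed r = {r_tv_agg:.2f}, controlling for discipline r = {r_controlled:.2f}")
```

In this simulation television viewing and aggression are clearly correlated (r is roughly .5), yet the correlation falls to about zero once discipline style is controlled for. Note that this only works here because the common-causal variable was measured; in real correlational research it often is not.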

Common-causal variables in correlational research designs can be thought of as mystery variables because, as they have not been measured, their presence and identity are usually unknown to the researcher. Since it is not possible to measure every variable that could cause both the predictor and outcome variables, the existence of an unknown common-causal variable is always a possibility. For this reason, we are left with the basic limitation of correlational research: correlation does not demonstrate causation. It is important that when you read about correlational research projects, you keep in mind the possibility of spurious relationships, and be sure to interpret the findings appropriately. Although correlational research is sometimes reported as demonstrating causality without any mention being made of the possibility of reverse causation or common-causal variables, informed consumers of research, like you, are aware of these interpretational problems.

In sum, correlational research designs have both strengths and limitations. One strength is that they can be used when experimental research is not possible because the predictor variables cannot be manipulated. Correlational designs also have the advantage of allowing the researcher to study behaviour as it occurs in everyday life. And we can also use correlational designs to make predictions — for instance, to predict from the scores on their battery of tests the success of job trainees during a training session. But we cannot use such correlational information to determine whether the training caused better job performance. For that, researchers rely on experiments.

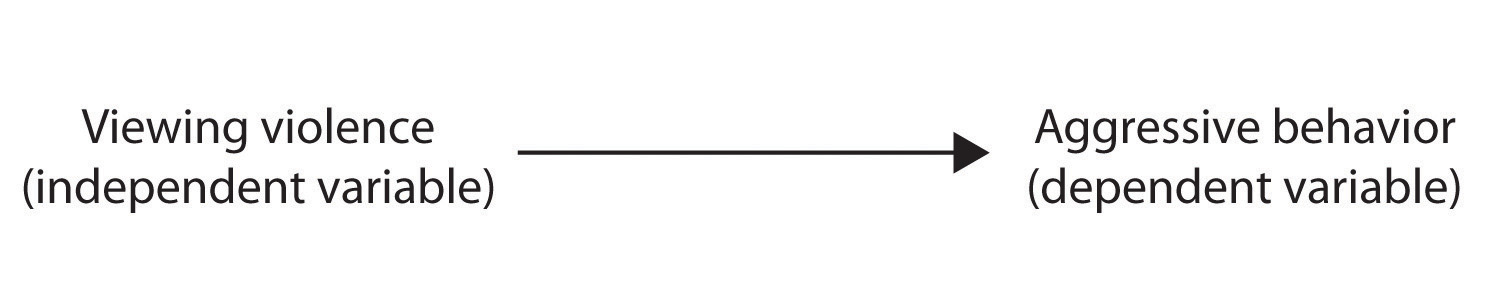

Experimental Research: Understanding the Causes of Behaviour

The goal of experimental research design is to provide more definitive conclusions about the causal relationships among the variables in the research hypothesis than is available from correlational designs. In an experimental research design, the variables of interest are called the independent variable (or variables) and the dependent variable. The independent variable in an experiment is the causing variable that is created (manipulated) by the experimenter. The dependent variable in an experiment is a measured variable that is expected to be influenced by the experimental manipulation. The research hypothesis suggests that the manipulated independent variable or variables will cause changes in the measured dependent variables. We can diagram the research hypothesis by using an arrow that points in one direction. This demonstrates the expected direction of causality (Figure 3.16):

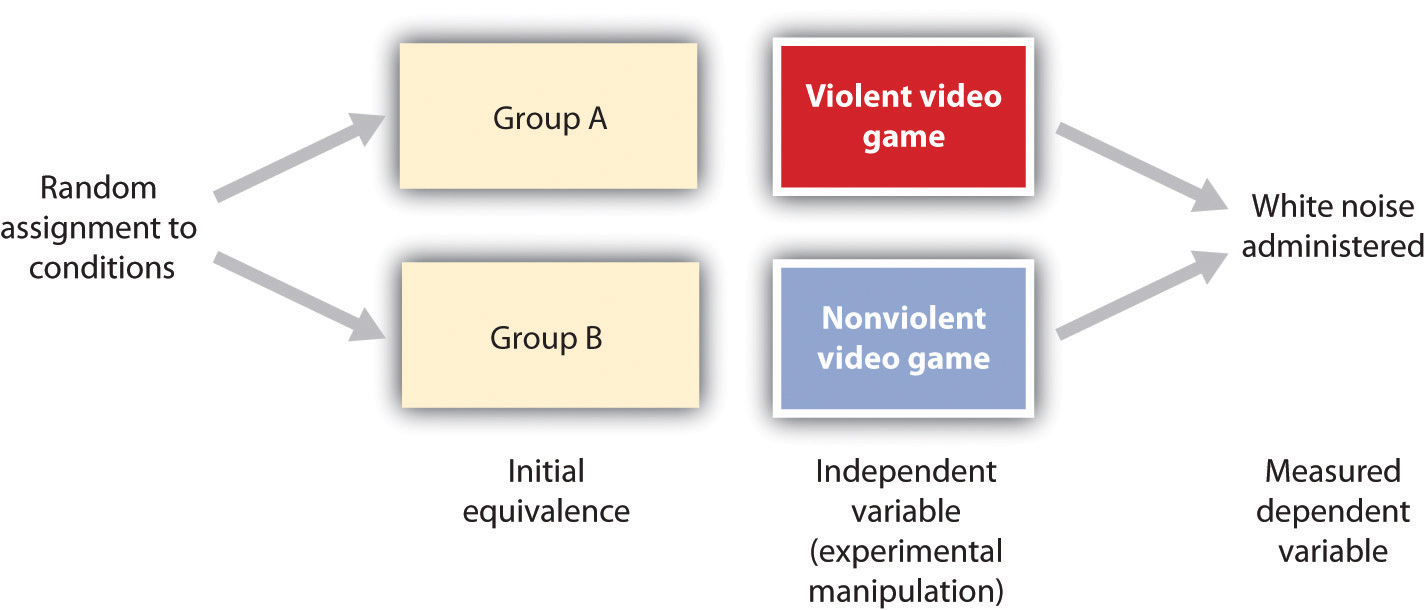

Research Focus: Video Games and Aggression

Consider an experiment conducted by Anderson and Dill (2000). The study was designed to test the hypothesis that playing violent video games would increase aggressive behaviour. In this research, male and female undergraduates from Iowa State University were given a chance to play either a violent video game (Wolfenstein 3D) or a nonviolent video game (Myst). During the experimental session, the participants played their assigned video games for 15 minutes. Then, after the play, each participant played a competitive game with an opponent in which the participant could deliver blasts of white noise through the earphones of the opponent. The operational definition of the dependent variable (aggressive behaviour) was the level and duration of noise delivered to the opponent. The design of the experiment is shown in Figure 3.17.

Two advantages of the experimental research design are (a) the assurance that the independent variable (also known as the experimental manipulation) occurs prior to the measured dependent variable, and (b) the creation of initial equivalence between the conditions of the experiment (in this case by using random assignment to conditions).

Experimental designs have two very nice features. For one, they guarantee that the independent variable occurs prior to the measurement of the dependent variable. This eliminates the possibility of reverse causation. Second, the influence of common-causal variables is controlled, and thus eliminated, by creating initial equivalence among the participants in each of the experimental conditions before the manipulation occurs.

The most common method of creating equivalence among the experimental conditions is through random assignment to conditions, a procedure in which the condition that each participant is assigned to is determined through a random process, such as drawing numbers out of an envelope or using a random number table. Anderson and Dill first randomly assigned about 100 participants to each of their two groups (Group A and Group B). Because they used random assignment to conditions, they could be confident that, before the experimental manipulation occurred, the students in Group A were, on average, equivalent to the students in Group B on every possible variable, including variables that are likely to be related to aggression, such as parental discipline style, peer relationships, hormone levels, diet — and in fact everything else.
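Random assignment is simple to carry out with a computer instead of an envelope or a random number table. The following is a minimal sketch, assuming 200 participants with made-up ID codes and two conditions; the function name `randomly_assign` and the seed value are illustrative choices, not part of Anderson and Dill's procedure.

```python
import random

def randomly_assign(participants, conditions, seed=None):
    """Shuffle the participants, then deal them out evenly across conditions."""
    rng = random.Random(seed)
    shuffled = list(participants)
    rng.shuffle(shuffled)  # chance alone decides the order
    groups = {condition: [] for condition in conditions}
    for position, person in enumerate(shuffled):
        # Deal participants to the conditions in turn, like dealing cards
        condition = conditions[position % len(conditions)]
        groups[condition].append(person)
    return groups

# Hypothetical participant ID codes P001 to P200
participants = [f"P{i:03d}" for i in range(1, 201)]
groups = randomly_assign(participants, ["violent game", "nonviolent game"], seed=42)

for condition, members in groups.items():
    print(condition, len(members), members[:3])
```

Because the order is determined by chance, no characteristic of a participant (discipline history, hormone levels, diet) can systematically influence which group he or she ends up in. Dealing from a shuffled list also keeps the two groups the same size, 100 each in this example.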

Then, after they had created initial equivalence, Anderson and Dill created the experimental manipulation: they had the participants in Group A play the violent game and the participants in Group B play the nonviolent game. They then compared the dependent variable (the white noise blasts) between the two groups, finding that the students who had played the violent video game gave significantly longer noise blasts than did the students who had played the nonviolent game.

Anderson and Dill had from the outset created initial equivalence between the groups. This initial equivalence allowed them to observe differences in the white noise levels between the two groups after the experimental manipulation, leading to the conclusion that it was the independent variable (and not some other variable) that caused these differences. The idea is that the only thing that was different between the students in the two groups was the video game they had played.

Despite the advantage of determining causation, experiments do have limitations. One is that they are often conducted in laboratory situations rather than in the everyday lives of people. Therefore, we do not know whether results that we find in a laboratory setting will necessarily hold up in everyday life. Second, and more important, is that some of the most interesting and key social variables cannot be experimentally manipulated. If we want to study the influence of the size of a mob on the destructiveness of its behaviour, or to compare the personality characteristics of people who join suicide cults with those of people who do not join such cults, these relationships must be assessed using correlational designs, because it is simply not possible to experimentally manipulate these variables.

Key Takeaways

- Descriptive, correlational, and experimental research designs are used to collect and analyze data.

- Descriptive designs include case studies, surveys, and naturalistic observation. The goal of these designs is to get a picture of the current thoughts, feelings, or behaviours in a given group of people. Descriptive research is summarized using descriptive statistics.

- Correlational research designs measure two or more relevant variables and assess a relationship between or among them. The variables may be presented on a scatter plot to visually show the relationships. The Pearson correlation coefficient (r) is a measure of the strength of the linear relationship between two variables.

- Common-causal variables may cause both the predictor and outcome variable in a correlational design, producing a spurious relationship. The possibility of common-causal variables makes it impossible to draw causal conclusions from correlational research designs.

- Experimental research involves the manipulation of an independent variable and the measurement of a dependent variable. Random assignment to conditions is normally used to create initial equivalence between the groups, allowing researchers to draw causal conclusions.

Exercises and Critical Thinking

- There is a negative correlation between the row in which a student sits in a large class (when the rows are numbered from front to back) and his or her final grade in the class. Do you think this represents a causal relationship or a spurious relationship, and why?

- Think of two variables (other than those mentioned in this book) that are likely to be correlated, but in which the correlation is probably spurious. What is the likely common-causal variable that is producing the relationship?

- Imagine a researcher wants to test the hypothesis that participating in psychotherapy will cause a decrease in reported anxiety. Describe the type of research design the investigator might use to draw this conclusion. What would be the independent and dependent variables in the research?

2.3 You Can Be an Informed Consumer of Psychological Research

Learning Objectives

- Outline the four potential threats to the validity of research and discuss how they may make it difficult to accurately interpret research findings.

- Describe how confounding may reduce the internal validity of an experiment.

- Explain how generalization, replication, and meta-analyses are used to assess the external validity of research findings.

Good research is valid research. When research is valid, the conclusions drawn by the researcher are legitimate. For instance, if a researcher concludes that participating in psychotherapy reduces anxiety, or that taller people are smarter than shorter people, the research is valid only if the therapy really works or if taller people really are smarter. Unfortunately, there are many threats to the validity of research, and these threats may sometimes lead to unwarranted conclusions. Often, and despite researchers’ best intentions, some of the research reported on websites as well as in newspapers, magazines, and even scientific journals is invalid. Validity is not an all-or-nothing proposition, which means that some research is more valid than other research. Only by understanding the potential threats to validity will you be able to make knowledgeable decisions about the conclusions that can or cannot be drawn from a research project. There are four major types of threats to the validity of research, and informed consumers of research are aware of each type.

Threats to the Validity of Research

- Threats to construct validity. Although it is claimed that the measured variables measure the conceptual variables of interest, they actually may not.

- Threats to statistical conclusion validity. Conclusions regarding the research may be incorrect because no statistical tests were made or because the statistical tests were incorrectly interpreted.

- Threats to internal validity. Although it is claimed that the independent variable caused the dependent variable, the dependent variable actually may have been caused by a confounding variable.

- Threats to external validity. Although it is claimed that the results are more general, the observed effects may actually only be found under limited conditions or for specific groups of people. (Stangor, 2011)

One threat to valid research occurs when there is a threat to construct validity. Construct validity refers to the extent to which the variables used in the research adequately assess the conceptual variables they were designed to measure. One requirement for construct validity is that the measure be reliable, where reliability refers to the consistency of a measured variable. A bathroom scale is usually reliable, because if we step on and off it a couple of times, the scale will consistently measure the same weight every time. Other measures, including some psychological tests, may be less reliable, and thus less useful.

Normally, we can assume that the researchers have done their best to assure the construct validity of their measures, but it is not inappropriate for you, as an informed consumer of research, to question this. It is always important to remember that the ability to learn about the relationship between the conceptual variables in a research hypothesis is dependent on the operational definitions of the measured variables. If the measures do not really measure the conceptual variables that they are designed to assess (e.g., if a supposed IQ test does not really measure intelligence), then they cannot be used to draw inferences about the relationship between the conceptual variables (Nunnally, 1978).

The statistical methods that scientists use to test their research hypotheses are based on probability estimates. You will see statements in research reports indicating that the results were statistically significant or not statistically significant. These statements will be accompanied by statistical tests, often including statements such as p < 0.05 or about confidence intervals. These statements describe the statistical significance of the data that have been collected. Statistical significance refers to the confidence with which a scientist can conclude that data are not due to chance or random error. When a researcher concludes that a result is statistically significant, he or she has determined that the observed data were very unlikely to have been caused by chance factors alone. Hence, there is likely a real relationship between or among the variables in the research design. Otherwise, the researcher concludes that the results were not statistically significant.
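One way to see what "unlikely to have been caused by chance factors alone" means is a permutation test, sketched below. This technique is not named in the text and is used here only as an illustration: the noise-blast durations are invented, and the function name `permutation_p_value`, the number of shuffles, and the seed are all assumptions. The idea is to shuffle the group labels many times and count how often chance alone produces a difference between group means at least as large as the one actually observed.

```python
import random

def mean(values):
    return sum(values) / len(values)

def permutation_p_value(group_a, group_b, n_shuffles=5000, seed=0):
    """Estimate how often shuffled labels give a mean difference this large."""
    rng = random.Random(seed)
    observed = abs(mean(group_a) - mean(group_b))
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    at_least_as_large = 0
    for _ in range(n_shuffles):
        rng.shuffle(pooled)  # break any real link between group and score
        diff = abs(mean(pooled[:n_a]) - mean(pooled[n_a:]))
        if diff >= observed:
            at_least_as_large += 1
    # Adding 1 to both counts keeps the estimate from being exactly zero
    return (at_least_as_large + 1) / (n_shuffles + 1)

# Hypothetical noise-blast durations in seconds (not Anderson and Dill's data)
violent_game = [6.8, 7.2, 7.9, 6.5, 8.1, 7.4, 7.0, 7.7]
nonviolent_game = [5.9, 6.1, 6.6, 5.4, 6.9, 6.0, 6.3, 5.8]

p_value = permutation_p_value(violent_game, nonviolent_game)
print("p < 0.05" if p_value < 0.05 else "not statistically significant")
```

With these made-up scores, very few shuffles produce a difference as large as the observed one, so the estimated p value falls below the conventional 0.05 cutoff. A small p value says the data would be surprising if only chance were at work; it does not prove the research hypothesis.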

Statistical conclusion validity refers to the extent to which we can be certain that the researcher has drawn accurate conclusions about the statistical significance of the research. Research will be invalid if the conclusions made about the research hypothesis are incorrect because statistical inferences about the collected data are in error. These errors can occur either because the scientist inappropriately infers that the data do support the research hypothesis when in fact they are due to chance, or when the researcher mistakenly fails to find support for the research hypothesis. Normally, we can assume that the researchers have done their best to ensure the statistical conclusion validity of a research design, but we must always keep in mind that inferences about data are probabilistic and never certain — this is why research never proves a theory.

Internal validity refers to the extent to which we can trust the conclusions that have been drawn about the causal relationship between the independent and dependent variables (Campbell & Stanley, 1963). Internal validity applies primarily to experimental research designs, in which the researcher hopes to conclude that the independent variable has caused the dependent variable. Internal validity is maximized when the research is free from the presence of confounding variables, that is, variables other than the independent variable on which the participants in one experimental condition differ systematically from those in other conditions.

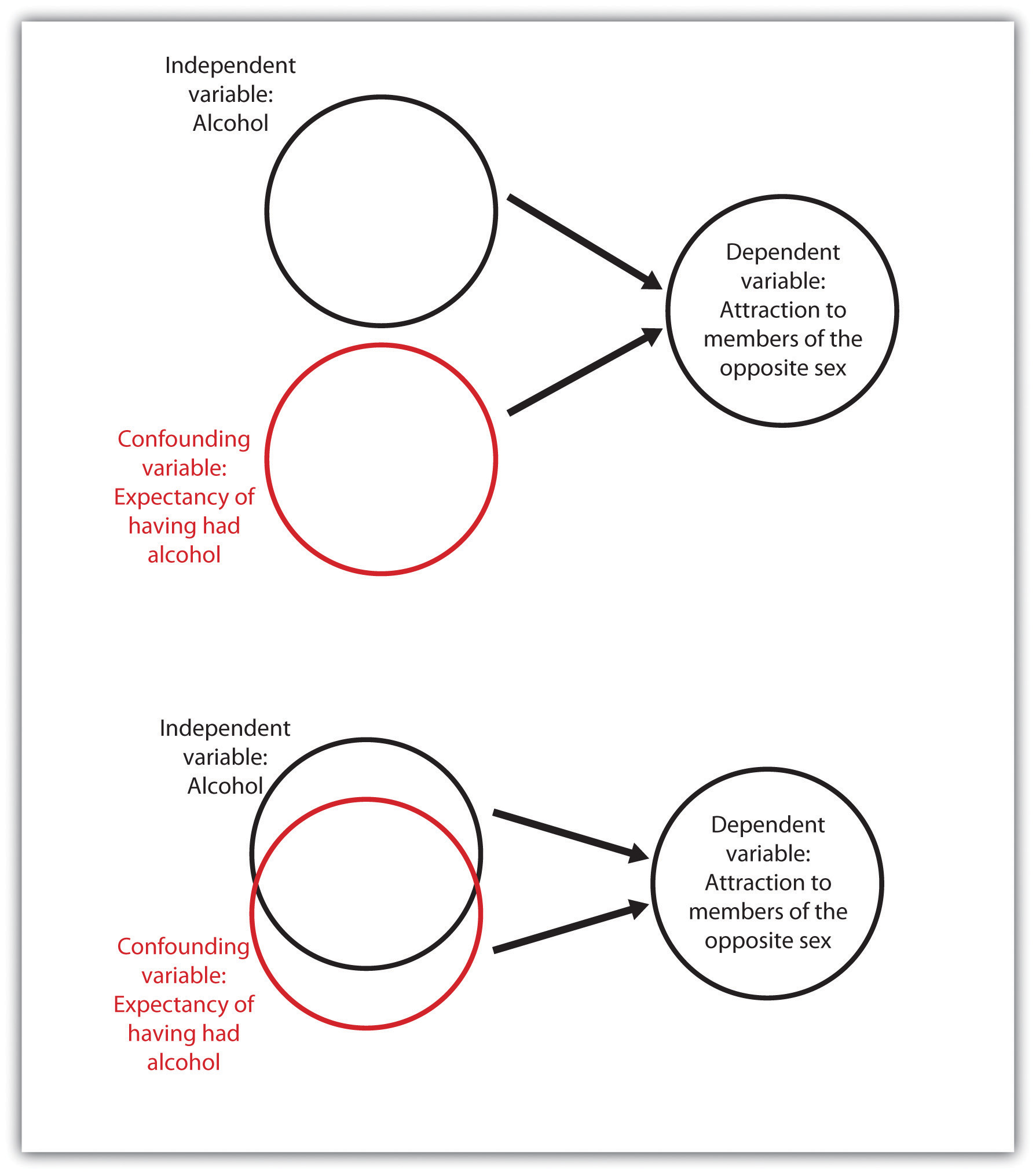

Consider an experiment in which a researcher tested the hypothesis that drinking alcohol makes members of the opposite sex look more attractive. Participants older than 21 years of age were randomly assigned to drink either orange juice mixed with vodka or orange juice alone. To eliminate the need for deception, the participants were told whether or not their drinks contained vodka. After enough time had passed for the alcohol to take effect, the participants were asked to rate the attractiveness of pictures of members of the opposite sex. The results of the experiment showed that, as predicted, the participants who drank the vodka rated the photos as significantly more attractive.

If you think about this experiment for a minute, it may occur to you that although the researcher wanted to draw the conclusion that the alcohol caused the differences in perceived attractiveness, the expectation of having consumed alcohol is confounded with the presence of alcohol. That is, the people who drank alcohol also knew they drank alcohol, and those who did not drink alcohol knew they did not. It is possible that simply knowing that they were drinking alcohol, rather than the effect of the alcohol itself, may have caused the differences (see Figure 3.18, “An Example of Confounding”). One solution to the problem of potential expectancy effects is to tell both groups that they are drinking orange juice and vodka but really give alcohol to only half of the participants (it is possible to do this because vodka has very little smell or taste). If differences in perceived attractiveness are found, the experimenter could then confidently attribute them to the alcohol rather than to the expectancy about having consumed alcohol.

Another threat to internal validity can occur when the experimenter knows the research hypothesis and also knows which experimental condition the participants are in. The outcome is the potential for experimenter bias, a situation in which the experimenter subtly treats the research participants in the various experimental conditions differently, resulting in an invalid confirmation of the research hypothesis. In one study demonstrating experimenter bias, Rosenthal and Fode (1963) sent 12 students to test a research hypothesis concerning maze learning in rats. Although it was not initially revealed to the students, they were actually the participants in an experiment. Six of the students were randomly told that the rats they would be testing had been bred to be highly intelligent, whereas the other six students were led to believe that the rats had been bred to be unintelligent. In reality there were no differences among the rats given to the two groups of students. When the students returned with their data, a startling result emerged. The rats run by students who expected them to be intelligent showed significantly better maze learning than the rats run by students who expected them to be unintelligent. Somehow the students’ expectations influenced their data. They evidently did something different when they tested the rats, perhaps subtly changing how they timed the maze running or how they treated the rats. And this experimenter bias probably occurred entirely out of their awareness.

To avoid experimenter bias, researchers frequently run experiments in which the researchers are blind to condition. This means that although the experimenters know the research hypotheses, they do not know which conditions the participants are assigned to. Experimenter bias cannot occur if the researcher is blind to condition. In a double-blind experiment, both the researcher and the research participants are blind to condition. For instance, in a double-blind trial of a drug, the researcher does not know whether the drug being given is the real drug or the ineffective placebo, and the patients also do not know which they are getting. Double-blind experiments eliminate the potential for experimenter effects and at the same time eliminate participant expectancy effects.

While internal validity refers to conclusions drawn about events that occurred within the experiment, external validity refers to the extent to which the results of a research design can be generalized beyond the specific way the original experiment was conducted. Generalization refers to the extent to which relationships among conceptual variables can be demonstrated in a wide variety of people and a wide variety of manipulated or measured variables.

Psychologists who use university students as participants in their research may be concerned about generalization, wondering if their research will generalize to people who are not university students. And researchers who study the behaviours of employees in one company may wonder whether the same findings would translate to other companies. Whenever there is reason to suspect that a result found for one sample of participants would not hold up for another sample, then research may be conducted with these other populations to test for generalization.

Recently, many psychologists have been interested in testing hypotheses about the extent to which a result will replicate across people from different cultures (Heine, 2010). For instance, a researcher might test whether the effects on aggression of playing violent video games are the same for Japanese children as they are for Canadian children by having samples of both Japanese and Canadian schoolchildren play violent and nonviolent games. If the results are the same in both cultures, then we say that the results have generalized, but if they are different, then we have learned a limiting condition of the effect (see Table 3.4, “A Cross-Cultural Replication”).

| Gaming behaviour | Canada | Japan |
|---|---|---|
| Violent games | More aggressive behaviour observed | ??? |
| Nonviolent games | Less aggressive behaviour observed | ??? |

In a cross-cultural replication, external validity is observed if the same effects that have been found in one culture are replicated in another culture. If they are not replicated in the new culture, then a limiting condition of the original results is found.

Unless the researcher has a specific reason to believe that generalization will not hold, it is appropriate to assume that a result found in one population (even if that population is college or university students) will generalize to other populations. Because the investigator can never demonstrate that the research results generalize to all populations, it is not expected that the researcher will attempt to do so. Rather, the burden of proof rests on those who claim that a result will not generalize.

Because any single test of a research hypothesis will always be limited in terms of what it can show, important advances in science are never the result of a single research project. Advances occur through the accumulation of knowledge that comes from many different tests of the same theory or research hypothesis. These tests are conducted by different researchers using different research designs, participants, and operationalizations of the independent and dependent variables. The process of repeating previous research, which forms the basis of all scientific inquiry, is known as replication.

Scientists often use a procedure known as meta-analysis to summarize replications of research findings. A meta-analysis is a statistical technique that uses the results of existing studies to integrate and draw conclusions about those studies. Because meta-analyses provide so much information, they are very popular and useful ways of summarizing research literature.

A meta-analysis provides a relatively objective method of reviewing research findings because it (a) specifies inclusion criteria that indicate exactly which studies will or will not be included in the analysis, (b) systematically searches for all studies that meet the inclusion criteria, and (c) provides an objective measure of the strength of observed relationships. Frequently, the researchers also include — if they can find them — studies that have not been published in journals.
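As a rough illustration of point (c), the sketch below combines correlations from several studies into one overall estimate. It assumes one common approach, not necessarily the one used in any given meta-analysis: each r is converted with Fisher's z transformation, weighted by its sample size minus 3, averaged, and converted back to r. The three studies and the function name `pooled_correlation` are hypothetical.

```python
import math

def pooled_correlation(studies):
    """Combine (r, n) pairs into one sample-size-weighted correlation."""
    weighted_sum = 0.0
    total_weight = 0.0
    for r, n in studies:
        z = math.atanh(r)   # Fisher's z transformation of r
        weight = n - 3      # larger studies count for more
        weighted_sum += weight * z
        total_weight += weight
    # Average on the z scale, then convert back to a correlation
    return math.tanh(weighted_sum / total_weight)

# Hypothetical studies: (observed correlation, number of participants)
studies = [(0.20, 50), (0.30, 120), (0.25, 80)]

overall_r = pooled_correlation(studies)
print(f"overall r = {overall_r:.2f}")
```

The combined estimate falls between the smallest and largest study correlations and sits closest to the result of the largest study, which is the sense in which a meta-analysis gives more weight to more informative studies.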

Psychology in Everyday Life: Critically Evaluating the Validity of Websites

The validity of research reports published in scientific journals is likely to be high because the hypotheses, methods, results, and conclusions of the research have been rigorously evaluated by other scientists, through peer review, before the research was published. For this reason, you will want to use peer-reviewed journal articles as your major source of information about psychological research.

Although research articles are the gold standard for validity, you may also need or want to get at least some information from other sources. The Internet is a vast source of information from which you can learn about almost anything, including psychology. Search engines, such as Google or Yahoo!, bring up hundreds or thousands of hits on a topic, and online encyclopedias, such as Wikipedia, provide articles about relevant topics.

Although you will naturally use the web to help you find information about fields such as psychology, you must also realize that it is important to carefully evaluate the validity of the information you get from the web. You must try to distinguish information that is based on empirical research from information that is based on opinion, and between valid and invalid data. The following material may be helpful to you in learning to make these distinctions.

The techniques for evaluating the validity of websites are similar to those that are applied to evaluating any other source of information. Ask first about the source of the information. Is the domain a “.com” (business), “.gov” (government), or “.org” (nonprofit) entity, or does it use a country code such as “.ca” (Canada)? This information can help you determine the author’s (or organization’s) purpose in publishing the website. Try to determine where the information is coming from. Is the data being summarized from objective sources, such as journal articles or academic or government agencies? Does it seem that the author is interpreting the information as objectively as possible, or is the data being interpreted to support a particular point of view? Consider what groups, individuals, and political or commercial interests stand to gain from the site. Is the website potentially part of an advocacy group whose web pages reflect the particular positions of the group? Material from any group’s site may be useful, but try to be aware of the group’s purposes and potential biases.

Also, ask whether or not the authors themselves appear to be a trustworthy source of information. Do they hold positions in an academic institution? Do they have peer-reviewed publications in scientific journals? Many useful web pages appear as part of organizational sites and reflect the work of that organization. You can be more certain of the validity of the information if it is sponsored by a professional organization, such as the Canadian Psychological Association or the Canadian Mental Health Association.

Try to check on the accuracy of the material and discern whether the sources of information seem current. Is the information cited so that you can read it in its original form? Reputable websites will probably link to other reputable sources, such as journal articles and scholarly books. Try to check the accuracy of the information by reading at least some of these sources yourself.

It is fair to say that all authors, researchers, and organizations have at least some bias and that the information from any site can be invalid. But good material attempts to be fair by acknowledging other possible positions, interpretations, or conclusions. A critical examination of the websites you browse will help you determine whether their information is valid and will give you more confidence in the information you take from them.

Key Takeaways

- Research is said to be valid when the conclusions drawn by the researcher are legitimate. Because all research has the potential to be invalid, no research ever “proves” a theory or research hypothesis.

- Construct validity, statistical conclusion validity, internal validity, and external validity are all types of validity that people who read and interpret research need to be aware of.

- Construct validity refers to the assurance that the measured variables adequately measure the conceptual variables.

- Statistical conclusion validity refers to the assurance that inferences about statistical significance are appropriate.

- Internal validity refers to the assurance that the independent variable has caused the dependent variable. Internal validity is greater when confounding variables are reduced or eliminated.

- External validity is greater when effects can be replicated across different manipulations, measures, and populations. Scientists use meta-analyses to better understand the external validity of research.

Exercises and Critical Thinking

- The Pepsi-Cola Company, now PepsiCo Inc., conducted the “Pepsi Challenge” by randomly assigning individuals to taste either a Pepsi or a Coke. The researchers labelled the glasses with only an “M” (for Pepsi) or a “Q” (for Coke) and asked the participants to rate how much they liked the beverage. The research showed that subjects overwhelmingly preferred glass “M” over glass “Q,” and the researchers concluded that Pepsi was preferred to Coke. Can you tell what confounding variable is present in this research design? How would you redesign the research to eliminate the confound?

- Locate a research report of a meta-analysis. Determine the criteria that were used to select the studies and report on the findings of the research.

References

Campbell, D. T., & Stanley, J. C. (1963). Experimental and quasi-experimental designs for research. Chicago: Rand McNally.

Heine, S. J. (2010). Cultural psychology. In S. T. Fiske, D. T. Gilbert, & G. Lindzey (Eds.), Handbook of social psychology (5th ed., Vol. 2, pp. 1423–1464). Hoboken, NJ: John Wiley & Sons.

Nunnally, J. C. (1978). Psychometric theory. New York, NY: McGraw-Hill.

Rosenthal, R., & Fode, K. L. (1963). The effect of experimenter bias on the performance of the albino rat. Behavioral Science, 8, 183–189.

Stangor, C. (2011). Research methods for the behavioral sciences (4th ed.). Mountain View, CA: Cengage.

- Adapted by J. Walinga.

Image Attributions

Figure 3.4: “Reading newspaper” by Alaskan Dude (http://commons.wikimedia.org/wiki/File:Reading_newspaper.jpg) is licensed under CC BY 2.0

References

Aiken, L., & West, S. (1991). Multiple regression: Testing and interpreting interactions. Newbury Park, CA: Sage.

Ainsworth, M. S., Blehar, M. C., Waters, E., & Wall, S. (1978). Patterns of attachment: A psychological study of the strange situation. Hillsdale, NJ: Lawrence Erlbaum Associates.

Anderson, C. A., & Dill, K. E. (2000). Video games and aggressive thoughts, feelings, and behavior in the laboratory and in life. Journal of Personality and Social Psychology, 78(4), 772–790.

Damasio, H., Grabowski, T., Frank, R., Galaburda, A. M., & Damasio, A. R. (2005). The return of Phineas Gage: Clues about the brain from the skull of a famous patient. In J. T. Cacioppo & G. G. Berntson (Eds.), Social neuroscience: Key readings (pp. 21–28). New York, NY: Psychology Press.

Freud, S. (1909/1964). Analysis of phobia in a five-year-old boy. In E. A. Southwell & M. Merbaum (Eds.), Personality: Readings in theory and research (pp. 3–32). Belmont, CA: Wadsworth. (Original work published 1909).

Kotowicz, Z. (2007). The strange case of Phineas Gage. History of the Human Sciences, 20(1), 115–131.

Rokeach, M. (1964). The three Christs of Ypsilanti: A psychological study. New York, NY: Knopf.

Stangor, C. (2011). Research methods for the behavioral sciences (4th ed.). Mountain View, CA: Cengage.

Long Descriptions

Figure 3.6 long description: There are 25 families. 24 families have an income between $44,000 and $111,000 and one family has an income of $3,800,000. The mean income is $223,960 while the median income is $73,000.
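The gap between the mean and the median described for Figure 3.6 can be reproduced with a small calculation. The following is only a minimal sketch: the eight "typical" incomes are hypothetical values chosen for illustration, not the data behind the figure, and only the $3,800,000 outlier is taken from the description.

```python
from statistics import mean, median

# Hypothetical incomes (in dollars) for eight typical families.
# These are illustrative values, NOT the data used in Figure 3.6.
typical = [44_000, 52_000, 61_000, 68_000, 73_000, 80_000, 95_000, 111_000]

# Add a single very high income, like the $3,800,000 family in the figure.
with_outlier = typical + [3_800_000]


def summarize(incomes):
    """Return the (mean, median) pair for a list of incomes."""
    return mean(incomes), median(incomes)


mean_typical, median_typical = summarize(typical)
mean_outlier, median_outlier = summarize(with_outlier)

print(f"Without outlier: mean = {mean_typical:,.0f}, median = {median_typical:,.0f}")
print(f"With outlier:    mean = {mean_outlier:,.0f}, median = {median_outlier:,.0f}")
```

In this hypothetical sample, adding the one extreme income moves the median only from $70,500 to $73,000, while the mean rises from $73,000 to roughly $487,000. This is why the median is usually the better summary of a skewed distribution.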

Figure 3.10 long description: Types of scatter plots.

- Positive linear, r=positive .82. The plots on the graph form a rough line that runs from lower left to upper right.

- Negative linear, r=negative .70. The plots on the graph form a rough line that runs from upper left to lower right.

- Independent, r=0.00. The plots on the graph are spread out around the centre.

- Curvilinear, r=0.00. The plots on the graph form a rough line that goes up and then down like a hill.

- Curvilinear, r=0.00. The plots on the graph form a rough line that goes down and then up like a ditch.
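It may seem surprising that the two curvilinear patterns above have r = 0.00 even though the variables are clearly related. The sketch below computes the Pearson correlation coefficient by hand for three small, hypothetical data sets (a straight line, a "hill," and a "ditch"). The data values and the function name pearson_r are assumptions made for illustration; they are not taken from Figure 3.10.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation: co-variation of x and y divided by the
    square root of the product of their separate variations."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    co_variation = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variation_x = sum((x - mean_x) ** 2 for x in xs)
    variation_y = sum((y - mean_y) ** 2 for y in ys)
    return co_variation / sqrt(variation_x * variation_y)


# Hypothetical scores, symmetric around zero.
xs = [-3, -2, -1, 0, 1, 2, 3]

line = [2 * x + 1 for x in xs]   # perfectly linear, rising
hill = [-(x ** 2) for x in xs]   # goes up and then down
ditch = [x ** 2 for x in xs]     # goes down and then up

r_line = pearson_r(xs, line)
r_hill = pearson_r(xs, hill)
r_ditch = pearson_r(xs, ditch)

print(f"line:  r = {r_line:.2f}")
print(f"hill:  r = {r_hill:.2f}")
print(f"ditch: r = {r_ditch:.2f}")
```

For the hill and the ditch, the rising half and the falling half cancel each other in the co-variation sum, so r comes out at zero even though y is completely determined by x. The coefficient r measures only the linear part of a relationship, which is why a scatter plot should be inspected alongside it.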

References

Alexander, P. A., & Winne, P. H. (Eds.). (2006). Handbook of educational psychology (2nd ed.). Mahwah, NJ: Lawrence Erlbaum Associates.

Borum, R. (2004). Psychology of terrorism. Tampa: University of South Florida.

Brown v. Board of Education. (1954). 347 U.S. 483.

DePaulo, B. M., Lindsay, J. J., Malone, B. E., Muhlenbruck, L., Charlton, K., & Cooper, H. (2003). Cues to deception. Psychological Bulletin, 129(1), 74–118.

Fajen, B. R., & Warren, W. H. (2003). Behavioral dynamics of steering, obstacle avoidance, and route selection. Journal of Experimental Psychology: Human Perception and Performance, 29(2), 343–362.

Fiske, S. T., Bersoff, D. N., Borgida, E., Deaux, K., & Heilman, M. E. (1991). Social science research on trial: Use of sex stereotyping research in Price Waterhouse v. Hopkins. American Psychologist, 46(10), 1049–1060.

Lewin, K. (1999). The complete social scientist: A Kurt Lewin reader (M. Gold, Ed.). Washington, DC: American Psychological Association.

Saxe, L., Dougherty, D., & Cross, T. (1985). The validity of polygraph testing: Scientific analysis and public controversy. American Psychologist, 40, 355–366.

Woolfolk-Hoy, A. E. (2005). Educational psychology (9th ed.). Boston, MA: Allyn & Bacon.